Shadow AI is the unsanctioned use of AI tools or features inside SaaS apps. It occurs when employees enable new AI capabilities or adopt AI apps without IT review or formal vetting, creating unmanaged identities and data exposure across cloud services.

This includes generative AI tools accessed through personal accounts, third-party LLM platforms used outside approved environments, and AI features activated within existing SaaS applications without security oversight.

In most cases, shadow AI is not intentional misuse. It is a byproduct of employees moving faster than governance, filling gaps between what approved tools provide and what the business demands.

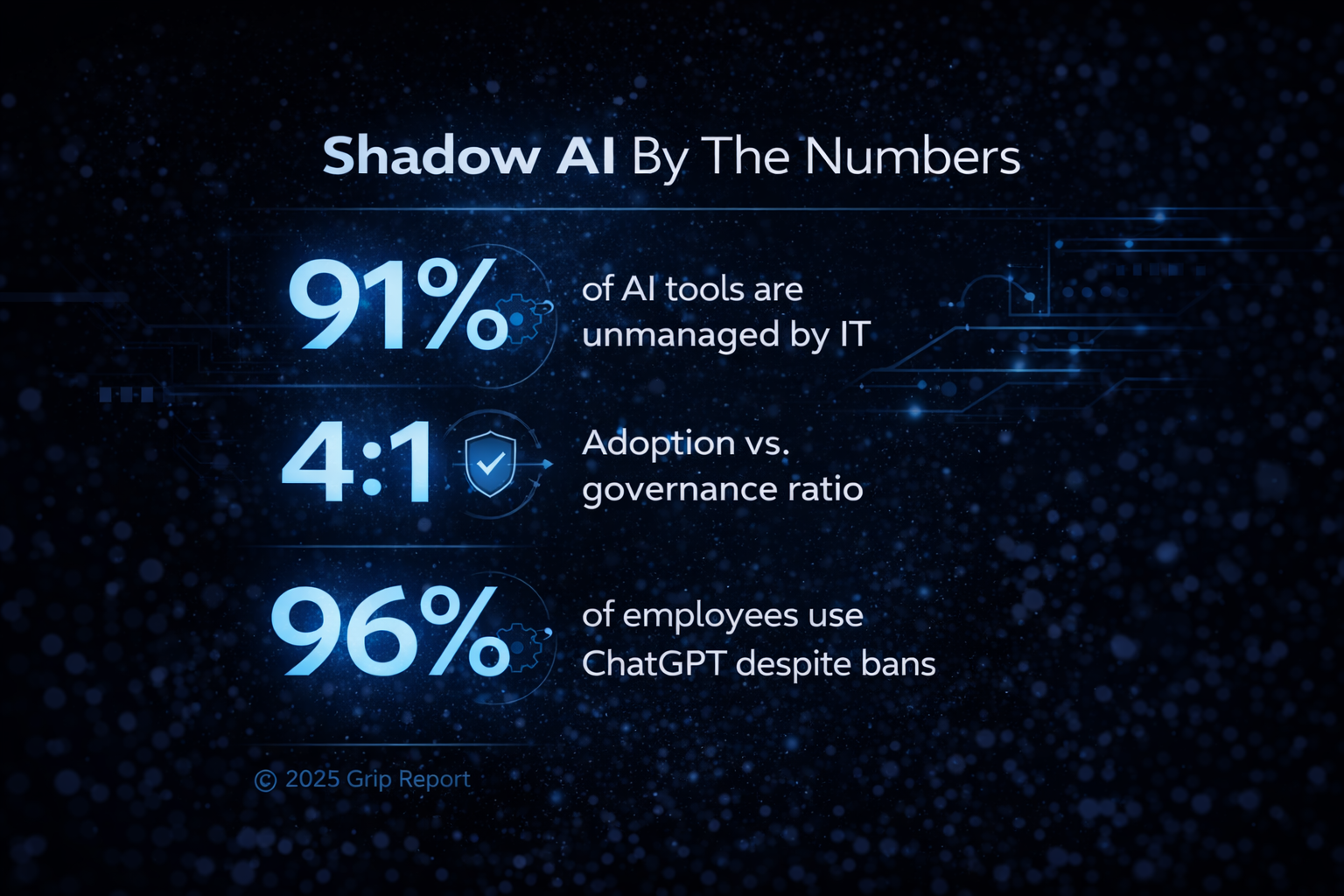

Findings from Grip's 2025 SaaS Security Risks Report show AI is embedding into SaaS faster than controls can keep up, widening the visibility and governance gap.

The risks of shadow AI fall into three categories.

First, data exposure. Sensitive information such as customer records, source code, or internal documents is often entered into AI tools with unclear storage, retention, or training policies.

Second, regulatory and compliance risk. When data leaves approved systems or crosses geographic boundaries without controls, organizations risk violating frameworks like GDPR or industry-specific requirements.

Third, decision risk. AI-generated outputs can be inaccurate or incomplete, and when used without validation, they can influence business decisions, customer communications, or code in ways that introduce downstream risk.

The danger isn’t only the app—it’s who it connects as and what it’s allowed to do. Common risk patterns:

Shadow AI compounds shadow SaaS, expanding a web of users, apps, scopes, and data flows that evade traditional controls.

Shadow AI emerges from a simple reality: employees are expected to move faster than the systems designed to govern them. When AI tools offer immediate gains in speed, automation, or insight, adoption happens long before formal approval processes can catch up.

Access is frictionless. Most AI tools require nothing more than an email address to start, and many are embedded directly into tools teams already use. The barrier to entry is effectively zero.

At the same time, governance models are still catching up. Policies are often unclear, outdated, or too slow to evaluate new tools at the pace they appear. Blocking access rarely solves the problem; it shifts usage into personal accounts and unmanaged environments.

The result is not rogue behavior. It is a systemic gap between how work gets done and how technology is controlled.

Shadow AI shows up in everyday workflows, often in ways that feel harmless but introduce real risk.

Using ChatGPT or Gemini via personal accounts for work tasks

Employees paste customer data, internal documents, or code into public AI tools outside company control.

AI browser extensions and plugins

Tools that summarize emails, generate responses, or scrape data operate with broad access to content without security review.

Unauthorized AI automation tools

Teams connect AI tools to SaaS apps to automate workflows, often granting persistent API access and permissions.

AI features enabled inside approved SaaS apps

Applications like CRM, support, or collaboration tools introduce AI features that users activate without additional vetting or policy updates.

An example of shadow AI is any AI tool or feature used for work without IT approval or visibility. This includes ChatGPT when accessed through personal or unmanaged accounts, even if the tool itself is widely known.

Shadow agentic AI refers to autonomous AI systems that can take action across applications without direct user input or ongoing oversight.

Unlike passive AI tools that generate content or responses, agentic AI can execute workflows. It can trigger actions, move data between systems, and make decisions based on predefined goals.

When deployed without governance, these agents introduce a new level of risk. They often operate with persistent access, broad permissions, and limited visibility into what actions they take or what data they interact with.

This compounds traditional shadow AI. The risk is no longer just what a user inputs into a tool, but what an autonomous system is allowed to do across identities, applications, and APIs over time.
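One way to make the "persistent access, broad permissions" concern concrete is to check each agent's grants for those patterns. The sketch below is illustrative only: the `AgentGrant` record, the scope names, and the `BROAD_SCOPES` set are hypothetical and are not taken from any specific SaaS API or from Grip's product.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Hypothetical scope names treated as "broad"; real scope strings vary by provider.
BROAD_SCOPES = {"files.readwrite.all", "mail.read", "admin"}


@dataclass
class AgentGrant:
    """Illustrative record of one access grant held by an AI agent."""
    agent: str
    app: str
    scopes: frozenset
    expires_at: Optional[datetime]  # None means the token never expires
    reviewed: bool                  # True if security has vetted this grant


def risk_flags(grant: AgentGrant) -> list:
    """Return the risk patterns described above that apply to this grant."""
    flags = []
    if grant.expires_at is None:
        flags.append("persistent access")
    if grant.scopes & BROAD_SCOPES:
        flags.append("broad permissions")
    if not grant.reviewed:
        flags.append("no governance review")
    return flags


# Example: an unreviewed agent holding a non-expiring, wide-scoped token.
grant = AgentGrant(
    agent="meeting-notes-agent",
    app="example-drive",
    scopes=frozenset({"files.readwrite.all", "calendar.read"}),
    expires_at=None,
    reviewed=False,
)
print(risk_flags(grant))
```

A grant that trips all three flags is a reasonable first candidate for review, since it combines the conditions that make an autonomous agent hard to contain.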

Managing shadow AI starts with visibility. Organizations need a clear understanding of what AI tools are in use, who is using them, and what access they have to data and systems.

Establish clear AI usage policies

Define which tools are approved, what data can be used, and where AI can be safely integrated into workflows. Policies should be practical enough to follow, not just enforceable on paper.

Provide approved alternatives

When employees have access to sanctioned AI tools that meet their needs, they are less likely to seek out unmanaged options.

Increase awareness without blocking productivity

Training should focus on how AI introduces risk, especially around data sharing and permissions, without discouraging responsible use.

Implement continuous detection and monitoring

Use SaaS and identity security tools to discover new AI tools, track usage, and evaluate permissions, tokens, and data access in real time.
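As a rough illustration of the discovery step, the sketch below compares observed sign-in events against an approved list and groups users by unapproved AI app. The event format, the `APPROVED_AI_APPS` and `KNOWN_AI_APPS` sets, and the app names are all hypothetical; real SaaS and identity security tools draw on much richer signals such as OAuth grants and token activity.

```python
from collections import defaultdict

# Hypothetical catalogs; in practice these would come from policy and an app inventory.
APPROVED_AI_APPS = {"approved-assistant"}
KNOWN_AI_APPS = {"approved-assistant", "chat-tool", "summarizer-extension"}


def find_shadow_ai(events):
    """Map each unapproved AI app to the sorted list of users seen using it.

    Each event is a dict with the illustrative keys "user" and "app".
    """
    users_by_app = defaultdict(set)
    for event in events:
        app = event["app"]
        if app in KNOWN_AI_APPS and app not in APPROVED_AI_APPS:
            users_by_app[app].add(event["user"])
    return {app: sorted(users) for app, users in users_by_app.items()}


events = [
    {"user": "ana@example.com", "app": "chat-tool"},
    {"user": "ben@example.com", "app": "approved-assistant"},
    {"user": "ben@example.com", "app": "chat-tool"},
    {"user": "ana@example.com", "app": "crm"},  # not an AI app, ignored here
]
print(find_shadow_ai(events))
```

Grouping by app rather than by user keeps the output aligned with the questions the section raises: which AI tools are in use, and who is using them.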

Detection alone is not enough. Effective management requires the ability to enforce controls, reduce access, and bring shadow AI into a governed environment without slowing the business down.
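To show how a detection might translate into enforcement, here is a minimal sketch that maps findings to controls. The finding names and the `ACTIONS` table are assumptions made for illustration; the right control for each finding depends on local policy.

```python
# Hypothetical mapping from a finding to a control; adjust to local policy.
ACTIONS = {
    "no_sso": "enforce SSO/MFA",
    "broad_scopes": "reduce scopes",
    "stale_token": "revoke token",
    "unapproved_app": "remove access",
}


def recommend_actions(findings):
    """Return controls for the given findings, in order, without duplicates."""
    actions = []
    for finding in findings:
        # Anything not covered by the table falls back to a human review.
        action = ACTIONS.get(finding, "review manually")
        if action not in actions:
            actions.append(action)
    return actions


print(recommend_actions(["broad_scopes", "stale_token", "broad_scopes", "unknown"]))
```

Falling back to manual review for unrecognized findings keeps the sketch from silently ignoring anything the table does not cover.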

Shadow AI starts with good intentions: speed, insight, and automation, enabled in clicks and spread across departments. Stopping it begins with visibility.

Grip discovers AI tools and newly enabled AI features, maps who owns them, analyzes permissions/scopes and data access, and recommends next actions (e.g., SSO/MFA enforcement, scope reduction, token revocation, or access removal). You regain control without stifling innovation. See how Grip reduces shadow AI risks, then take the next step and book a demo with our team.

Shadow AI in cybersecurity is the unsanctioned use of AI tools or AI features in SaaS apps without IT/security approval, creating blind spots in identity, access, and data protection.

Shadow AI typically emerges when employees or teams adopt new AI tools to automate tasks, analyze data, or enhance productivity, without going through formal review processes. It also occurs when users activate AI features in SaaS apps that were previously approved in a non-AI form, bypassing renewed risk assessment.

Unapproved AI tools can access or store sensitive data, create unmonitored accounts, and introduce unauthorized access pathways. Without visibility into how AI tools handle data or interact with other services, organizations face increased risk of data leaks, compliance violations, and unmonitored identity exposure.

Shadow AI tools may interact with corporate documents, customer records, source code, financial data, or employee credentials. When these tools are not reviewed for data protection practices, they can lead to unintended data sharing or AI model training on sensitive content.

To manage shadow AI risk, organizations need tools that automatically detect unauthorized AI tools and SaaS apps in use. Solutions like Grip help identify when a new AI tool enters a SaaS environment, who it belongs to, how severe the risk is, and what to do next, such as enforcing SSO or MFA or revoking access entirely. Learn more about Grip's shadow AI detection and management capabilities.
